The TPSPLINE Procedure

Computational Formulas

The theoretical foundations for the thin-plate smoothing spline are described in Duchon (1976, 1977) and Meinguet (1979). Further results and applications are given in Wahba and Wendelberger (1980), Hutchinson and Bischof (1983), and Seaman and Hutchinson (1985).

Suppose that $\mathcal{H}_m$ is a space of functions whose partial derivatives of total order m are in $L_2(E^d)$, where $E^d$ is the domain of $\mb{x}$.

Now, consider the data model

![\[ y_ i = f(\mb{x}_ i)+\epsilon _ i, i=1, \dots , n \]](images/statug_tpspline0068.png)

where $f \in \mathcal{H}_m$.

Using the notation from the section Penalized Least Squares Estimation, for a fixed $\lambda$, estimate f by minimizing the penalized least squares function

![\[ \frac{1}{n} \sum ^ n_{i=1}(y_ i-f({\mb{x}}_ i)-{\mb{z}}_ i{\bbeta })^2 + \lambda J_ m(f) \]](images/statug_tpspline0070.png)

Here, $\lambda J_m(f)$ is the penalty term to enforce smoothness on f. There are several ways to define $J_m(f)$. For the thin-plate smoothing spline, with $\mb{x} = (x_1, \dots, x_d)$ of dimension d, define $J_m(f)$ as

![\[ J_ m(f)= \int _{-\infty }^{\infty } \cdots \int _{-\infty }^{\infty } \sum \frac{m!}{\alpha _1!\cdots \alpha _ d !} \left( \begin{array}{c} \frac{\partial ^ m f}{\partial {x_1}^{\alpha _1}\cdots \partial {x_ d}^{\alpha _ d}} \end{array}\right)^2 \mr{d}x_1\cdots \mr{d}x_ d \]](images/statug_tpspline0074.png)

where $\sum_{i=1}^{d} \alpha_i = m$. Under this definition, $J_m(f)$ gives zero penalty to some functions. The space that is spanned by the set of polynomials that contribute zero penalty is called the polynomial space. The dimension of the polynomial space M is a function of dimension d and order m of the smoothing penalty, $M = \binom{m+d-1}{d}$.
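As a quick check of how the polynomial space grows, the following minimal Python sketch evaluates the dimension M for a few combinations of m and d. It assumes the count $M = \binom{m+d-1}{d}$, which is the number of monomials of total degree less than m in d variables; the function name `polynomial_space_dim` is hypothetical and is not part of PROC TPSPLINE.

```python
from math import comb

def polynomial_space_dim(m: int, d: int) -> int:
    """Number of monomials of total degree < m in d variables (assumed formula)."""
    if m < 1 or d < 1:
        raise ValueError("m and d must be positive integers")
    return comb(m + d - 1, d)

# A few illustrative combinations of penalty order m and dimension d
for m, d in [(2, 1), (2, 2), (2, 3), (3, 2)]:
    print(f"m={m}, d={d}: M={polynomial_space_dim(m, d)}")
```

For m = 2 the polynomial space contains only the constant and the d linear terms, so M = d + 1.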

Given the condition that $2m > d$, the function that minimizes the penalized least squares criterion has the form

![\[ \hat{f}(\mb{x})=\sum _{j=1}^ M\theta _ j\phi _ j(\mb{x})+ \sum _{i=1}^ n\delta _ i\eta _{md}(\| \mb{x}-\mb{x}_ i\| ) \]](images/statug_tpspline0078.png)

where  and

and  are vectors of coefficients to be estimated. The M functions

are vectors of coefficients to be estimated. The M functions  are linearly independent polynomials that span the space of functions for which

are linearly independent polynomials that span the space of functions for which  is zero. The basis functions

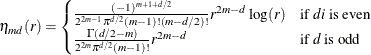

is zero. The basis functions  are defined as

are defined as

When d = 2 and m = 2, then $M = \binom{3}{2} = 3$, $\phi_1(\mb{x}) = 1$, $\phi_2(\mb{x}) = x_1$, and $\phi_3(\mb{x}) = x_2$. The penalty $J_2(f)$ is as follows:

![\[ J_2(f)=\int _{-\infty } ^{\infty } \int _{-\infty }^{\infty } \left(\left( \begin{array}{c} \frac{\partial ^2 f}{\partial {x_1}^2} \end{array}\right)^2 +2 \left( \begin{array}{c} \frac{\partial ^2 f}{\partial {x_1} \partial {x_2}} \end{array}\right)^2 + \left( \begin{array}{c} \frac{\partial ^2 f}{\partial {x_2}^2} \end{array}\right)^2\right) \mr{d}x_1\mr{d}x_2 \]](images/statug_tpspline0088.png)

For the sake of simplicity, the formulas and equations that follow assume m = 2. See Wahba (1990) and Bates et al. (1987) for more details.

Duchon (1976) showed that $f_\lambda$ can be represented as

![\[ f_\lambda (\mb{x}_ i)=\theta _0+\sum _{j=1}^{d} \theta _ j {\mb{x}_{i}}_ j+ \sum _{j=1}^ n\delta _ j E_2(\mb{x}_ i-\mb{x}_ j) \]](images/statug_tpspline0089.png)

where $E_2(\mb{s}) = \frac{1}{2^3\pi}\| \mb{s}\| ^2 \log (\| \mb{s}\| )$ for d = 2. For derivations of $E_2(\mb{s})$ for other values of d, see Villalobos and Wahba (1987).
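The radial basis function for general m and d can be sketched in a few lines of Python. The closed form used below is the standard one given in Wahba (1990) and is assumed here to match the definition of $\eta_{md}$ in this section: for even d the function is proportional to $r^{2m-d}\log r$, and for odd d it is proportional to $r^{2m-d}$. The function name `eta_md` is hypothetical. With d = 2 and m = 2 the sketch reduces to $\frac{1}{2^3\pi} r^2 \log r$, which agrees with $E_2$.

```python
from math import factorial, gamma, log, pi

def eta_md(m: int, d: int, r: float) -> float:
    """Thin-plate radial basis function at distance r (assumed Wahba 1990 form)."""
    if 2 * m <= d:
        raise ValueError("the thin-plate spline requires 2m > d")
    if r < 0.0:
        raise ValueError("r is a distance and must be nonnegative")
    if r == 0.0:
        return 0.0  # limiting value, since 2m - d > 0
    if d % 2 == 0:
        half = d // 2
        sign = -1.0 if (m + 1 + half) % 2 else 1.0
        coef = sign / (2 ** (2 * m - 1) * pi ** half
                       * factorial(m - 1) * factorial(m - half))
        return coef * r ** (2 * m - d) * log(r)
    # Odd d: gamma is evaluated at a negative half-integer, where it is finite
    coef = gamma(d / 2 - m) / (2 ** (2 * m) * pi ** (d / 2) * factorial(m - 1))
    return coef * r ** (2 * m - d)

# Compare the general form with E_2 for d = 2, m = 2 at an arbitrary distance
r = 1.5
print(eta_md(2, 2, r), r ** 2 * log(r) / (2 ** 3 * pi))
```

For d = 1 and m = 2 the same function returns $r^3/12$, the kernel of the ordinary cubic smoothing spline, which is a convenient sanity check on the constants.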

If you define $\mb{K}$ with elements $\mb{K}_{ij} = E_2(\mb{x}_i - \mb{x}_j)$ and $\mb{T}$ with ith row $(1, {\mb{x}_i}_1, \dots, {\mb{x}_i}_d)$, the goal is to find vectors of coefficients $\bbeta$, $\btheta$, and $\bdelta$ that minimize

![\[ S_\lambda (\bbeta , \btheta ,\bdelta )= \frac{1}{n} \| \mb{y}-\mb{T}\btheta - \mb{K}\bdelta -\mb{Z}\bbeta \| ^2 +\lambda \bdelta ’ \mb{K} \bdelta \]](images/statug_tpspline0097.png)

A unique solution is guaranteed if the matrix $\mb{T}$ is of full rank and $\bdelta'\mb{K}\bdelta \ge 0$.

If $\balpha = (\btheta', \bbeta')'$ and $\mb{X} = (\mb{T} : \mb{Z})$, the expression for $S_\lambda$ becomes

![\[ \frac{1}{n}\| \mb{y}-\mb{X}\balpha - \mb{K}\bdelta \| ^2 +\lambda \bdelta ’ \mb{K} \bdelta \]](images/statug_tpspline0103.png)

The coefficients $\balpha$ and $\bdelta$ can be obtained by solving

![\[ \left. \begin{array}{rcl} (\mb{K}+n\lambda \mb{I}_ n) \bdelta +\mb{X}\balpha & = & \mb{y} \\ \mb{X}’ \bdelta & = & \mb{0} \end{array} \right. \]](images/statug_tpspline0105.png)

To compute $\balpha$ and $\bdelta$, let the QR decomposition of $\mb{X}$ be

![\[ \mb{X} = (\mb{Q}_1 ~ \mb{Q}_2) \left( \begin{array}{c} \mb{R} \\ \mb{0} \end{array} \right) \]](images/statug_tpspline0107.png)

where $(\mb{Q}_1 : \mb{Q}_2)$ is an orthogonal matrix and $\mb{R}$ is upper triangular, with $\mb{X}'\mb{Q}_2 = \mb{0}$ (Dongarra et al. 1979).

Since $\mb{X}'\bdelta = \mb{0}$, $\bdelta$ must be in the column space of $\mb{Q}_2$. Therefore, $\bdelta$ can be expressed as $\bdelta = \mb{Q}_2\bgamma$ for a vector $\bgamma$. Substituting $\bdelta = \mb{Q}_2\bgamma$ into the preceding equation and multiplying through by $\mb{Q}_2'$ gives

![\[ \mb{Q}_2 ’ (\mb{K}+n\lambda \mb{I})\mb{Q}_2 \bgamma = \mb{Q}_2’ \mb{y} \]](images/statug_tpspline0117.png)

or

![\[ \bdelta = \mb{Q}_2 \bgamma = \mb{Q}_2[\mb{Q}_2’(\mb{K}+n\lambda \mb{I})\mb{Q}_2]^{-1} \mb{Q}_2’ \mb{y} \]](images/statug_tpspline0118.png)

The coefficient $\balpha$ can be obtained by solving

![\[ \mb{R}\balpha = \mb{Q}_1’[ \mb{y}- (\mb{K}+n\lambda \mb{I})\bdelta ] \]](images/statug_tpspline0119.png)
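The QR route described above can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the procedure's implementation: it takes d = 2 and m = 2, uses no semiparametric covariates (so $\mb{X} = \mb{T}$), and runs on simulated data. The function names `tps_kernel` and `fit_tps` are hypothetical.

```python
import numpy as np

def tps_kernel(a, b):
    """E_2 at all pairwise differences between rows of a and rows of b (d = 2, m = 2)."""
    r = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    k = np.zeros_like(r)
    pos = r > 0                      # E_2(0) is taken as its limit, 0
    k[pos] = r[pos] ** 2 * np.log(r[pos]) / (8.0 * np.pi)
    return k

def fit_tps(x, y, lam):
    """Solve for alpha and delta through the QR decomposition of X (X = T, no Z)."""
    n = x.shape[0]
    X = np.column_stack([np.ones(n), x])        # rows (1, x_i1, x_i2)
    K = tps_kernel(x, x)
    M = K + n * lam * np.eye(n)
    p = X.shape[1]
    Q, R = np.linalg.qr(X, mode="complete")     # Q is n x n, R is n x p
    Q1, Q2, R1 = Q[:, :p], Q[:, p:], R[:p, :]
    gamma = np.linalg.solve(Q2.T @ M @ Q2, Q2.T @ y)
    delta = Q2 @ gamma                          # delta lies in the column space of Q2
    alpha = np.linalg.solve(R1, Q1.T @ (y - M @ delta))
    return alpha, delta, X, K

# Simulated data, purely for illustration
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(30, 2))
y = np.sin(2.0 * x[:, 0]) + x[:, 1] ** 2 + 0.1 * rng.standard_normal(30)
lam = 0.1
alpha, delta, X, K = fit_tps(x, y, lam)

# Both equations of the linear system should hold up to rounding error
n = len(y)
residual_1 = (K + n * lam * np.eye(n)) @ delta + X @ alpha - y
residual_2 = X.T @ delta
print(np.abs(residual_1).max(), np.abs(residual_2).max())
```

A general solver is used for the triangular system to keep the sketch dependent on NumPy only; a dedicated triangular solve would be the more economical choice.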

The influence matrix $\mb{A}(\lambda)$ is defined by

![\[ \hat{\mb{y}}=\mb{A}(\lambda ) \mb{y} \]](images/statug_tpspline0120.png)

and has the form

![\[ \mb{A}(\lambda ) = \mb{I} - n\lambda \mb{Q}_2 [\mb{Q}_2’(\mb{K}+n\lambda \mb{I})\mb{Q}_2]^{-1} \mb{Q}_2’ \]](images/statug_tpspline0121.png)

Similar to the regression case, if you consider the trace of $\mb{A}(\lambda)$ as the degrees of freedom for the model and the trace of $(\mb{I} - \mb{A}(\lambda))$ as the degrees of freedom for the error, the estimate $\hat{\sigma}^2$ can be represented as

![\[ \hat{\sigma }^2 = \frac{\mr{RSS}(\lambda )}{\mr{tr}(\mb{I} - \mb{A}(\lambda ))} \]](images/statug_tpspline0125.png)

where $\mr{RSS}(\lambda)$ is the residual sum of squares. Theoretical properties of these estimates have not yet been published. However, good numerical results in simulation studies have been described by several authors. For more information, see O’Sullivan and Wong (1987); Nychka (1986a, 1986b, 1988); Hall and Titterington (1987).
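The influence matrix, the two degrees-of-freedom quantities, and the variance estimate can be computed directly from the formulas above. The NumPy sketch below is a minimal illustration that assumes d = 2, m = 2, no semiparametric covariates, and simulated data; `tps_kernel` and `influence_matrix` are hypothetical names. It uses the fact that $\mb{Q}_2'\mb{Q}_2 = \mb{I}$, so that $\mb{Q}_2'(\mb{K}+n\lambda \mb{I})\mb{Q}_2 = \mb{Q}_2'\mb{K}\mb{Q}_2 + n\lambda \mb{I}$.

```python
import numpy as np

def tps_kernel(a, b):
    """E_2 at all pairwise differences between rows of a and rows of b (d = 2, m = 2)."""
    r = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    k = np.zeros_like(r)
    pos = r > 0
    k[pos] = r[pos] ** 2 * np.log(r[pos]) / (8.0 * np.pi)
    return k

def influence_matrix(x, lam):
    """A(lam) = I - n*lam * Q2 [Q2'(K + n*lam*I) Q2]^{-1} Q2' with X = T."""
    n = x.shape[0]
    X = np.column_stack([np.ones(n), x])
    K = tps_kernel(x, x)
    Q, _ = np.linalg.qr(X, mode="complete")
    Q2 = Q[:, X.shape[1]:]
    inner = Q2.T @ K @ Q2 + n * lam * np.eye(Q2.shape[1])
    return np.eye(n) - n * lam * Q2 @ np.linalg.solve(inner, Q2.T)

# Simulated data, purely for illustration
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(30, 2))
y = np.sin(2.0 * x[:, 0]) + x[:, 1] ** 2 + 0.1 * rng.standard_normal(30)
lam = 0.1

A = influence_matrix(x, lam)
n = len(y)
resid = y - A @ y                 # (I - A(lam)) y
rss = resid @ resid               # RSS(lam)
df_model = np.trace(A)            # degrees of freedom for the model
df_error = n - df_model           # tr(I - A(lam))
sigma2_hat = rss / df_error
print(df_model, df_error, sigma2_hat)
```

Because the columns of $\mb{T}$ are orthogonal to $\mb{Q}_2$, the influence matrix reproduces them exactly, so the model degrees of freedom always lie between M and n.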

Confidence Intervals

Viewing the spline model as a Bayesian model, Wahba (1983) proposed Bayesian confidence intervals for smoothing spline estimates as

![\[ \hat{f}_\lambda (\mb{x}_ i) \pm z_{\alpha /2} \sqrt {\hat{\sigma }^2 a_{ii}(\lambda )} \]](images/statug_tpspline0127.png)

where $a_{ii}(\lambda)$ is the ith diagonal element of the $\mb{A}(\lambda)$ matrix and $z_{\alpha/2}$ is the $1-\alpha/2$ quantile of the standard normal distribution. The confidence intervals are interpreted as intervals "across the function" as opposed to pointwise intervals.
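Given fitted values, the diagonal of the influence matrix, and the variance estimate, the interval formula is a one-line computation per observation. The sketch below uses only the Python standard library; the function name `bayesian_ci` and the numeric inputs are hypothetical and serve only to show the arithmetic.

```python
from math import sqrt
from statistics import NormalDist

def bayesian_ci(fitted, a_diag, sigma2, alpha=0.05):
    """Intervals fitted_i +/- z * sqrt(sigma2 * a_ii) for each observation."""
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)   # 1 - alpha/2 normal quantile
    intervals = []
    for f_i, a_ii in zip(fitted, a_diag):
        half_width = z * sqrt(sigma2 * a_ii)
        intervals.append((f_i - half_width, f_i + half_width))
    return intervals

# Hypothetical inputs: three fitted values with their influence-matrix diagonals
fitted = [1.20, 0.85, 1.05]
a_diag = [0.40, 0.25, 0.30]
sigma2_hat = 0.01
for lower, upper in bayesian_ci(fitted, a_diag, sigma2_hat):
    print(f"({lower:.4f}, {upper:.4f})")
```

Observations with a larger $a_{ii}(\lambda)$ carry more leverage in the fit and therefore receive wider intervals.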

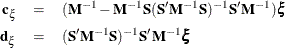

For SCORE data sets, the hat matrix $\mb{A}(\lambda)$ is not available. To compute the Bayesian confidence interval for a new point $\mb{x}_{\mathrm{new}}$, let

![\[ \mb{S}=\mb{X}, ~ \mb{M}=\mb{K}+n\lambda \mb{I} \]](images/statug_tpspline0132.png)

and let $\bxi$ be an $n \times 1$ vector with ith entry

![\[ \eta _{md}(\| \mb{x}_{\mathrm{new}}-\mb{x}_ i\| ) \]](images/statug_tpspline0135.png)

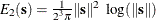

When d = 2 and m = 2, $\xi_i$ is computed with

![\[ E_2(\mb{x}_ i-\mb{x}_{\mathrm{new}})= \frac{1}{2^3\pi }\| \mb{x}_ i-\mb{x}_{\mathrm{new}}\| ^2 \log (\| \mb{x}_ i-\mb{x}_{\mathrm{new}}\| ) \]](images/statug_tpspline0137.png)

The vector $\bphi$ contains the evaluations at $\mb{x}_{\mathrm{new}}$ of the polynomials that span the functional space where $J_m(f)$ is zero. The details for $\mb{X}$, $\mb{K}$, and $E_2$ are discussed in the previous section. Wahba (1983) showed that the Bayesian posterior variance of the estimate at $\mb{x}_{\mathrm{new}}$ satisfies

![\[ n\lambda \mathrm{Var}(\mb{x}_{\mathrm{new}}) = \bphi ’(\mb{S}’\mb{M}^{-1}\mb{S})^{-1}\bphi - 2\bphi ’\mb{d}_{\xi } - \bxi ’\mb{c}_{\xi } \]](images/statug_tpspline0141.png)

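where

\[ \mb{c}_{\xi} = (\mb{M}^{-1} - \mb{M}^{-1}\mb{S}(\mb{S}'\mb{M}^{-1}\mb{S})^{-1}\mb{S}'\mb{M}^{-1})\bxi , \qquad \mb{d}_{\xi} = (\mb{S}'\mb{M}^{-1}\mb{S})^{-1}\mb{S}'\mb{M}^{-1}\bxi \]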

Suppose that you fit a spline estimate to experimental data that consist of a true function f and a random error term $\epsilon_i$. In repeated experiments, it is likely that about $100(1-\alpha)\%$ of the confidence intervals cover the corresponding true values, although some values are covered every time and other values are not covered by the confidence intervals most of the time. This effect is more pronounced when the true curve or surface has small regions of particularly rapid change.
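The posterior variance formula for a new point can be sketched in NumPy. The sketch makes several assumptions that are not stated in this section: d = 2 and m = 2, no semiparametric covariates, simulated design points, and $\mb{c}_{\xi}$ and $\mb{d}_{\xi}$ taken to be the solutions of the linear system of the previous section with $\bxi$ in place of $\mb{y}$, that is, $\mb{d}_{\xi} = (\mb{S}'\mb{M}^{-1}\mb{S})^{-1}\mb{S}'\mb{M}^{-1}\bxi$ and $\mb{c}_{\xi} = \mb{M}^{-1}(\bxi - \mb{S}\mb{d}_{\xi})$. All function names are hypothetical.

```python
import numpy as np

def tps_kernel(a, b):
    """E_2 at all pairwise differences between rows of a and rows of b (d = 2, m = 2)."""
    r = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    k = np.zeros_like(r)
    pos = r > 0
    k[pos] = r[pos] ** 2 * np.log(r[pos]) / (8.0 * np.pi)
    return k

def variance_factor(x, x_new, lam):
    """Right-hand side of the posterior variance formula, divided by n*lam."""
    n = x.shape[0]
    S = np.column_stack([np.ones(n), x])
    M = tps_kernel(x, x) + n * lam * np.eye(n)
    xi = tps_kernel(x, x_new[None, :])[:, 0]     # E_2(x_i - x_new), i = 1..n
    phi = np.concatenate(([1.0], x_new))         # polynomials (1, x_1, x_2) at x_new
    Minv_S = np.linalg.solve(M, S)
    Minv_xi = np.linalg.solve(M, xi)
    B = S.T @ Minv_S                             # S' M^{-1} S
    d_xi = np.linalg.solve(B, S.T @ Minv_xi)     # assumed definition of d_xi
    c_xi = Minv_xi - Minv_S @ d_xi               # assumed definition of c_xi
    rhs = phi @ np.linalg.solve(B, phi) - 2.0 * phi @ d_xi - xi @ c_xi
    return rhs / (n * lam)

def influence_diag(x, lam):
    """Diagonal of A(lam), computed through Q2 for comparison."""
    n = x.shape[0]
    X = np.column_stack([np.ones(n), x])
    Q, _ = np.linalg.qr(X, mode="complete")
    Q2 = Q[:, X.shape[1]:]
    inner = Q2.T @ tps_kernel(x, x) @ Q2 + n * lam * np.eye(Q2.shape[1])
    return np.diag(np.eye(n) - n * lam * Q2 @ np.linalg.solve(inner, Q2.T))

# Simulated design points, purely for illustration
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(30, 2))
lam = 0.1

print(variance_factor(x, np.array([0.2, -0.3]), lam))         # a new point
print(variance_factor(x, x[0], lam), influence_diag(x, lam)[0])  # a design point
```

Under these assumptions the factor at a design point coincides with the corresponding $a_{ii}(\lambda)$, which suggests reading the formula as a variance in units of $\sigma^2$: multiplying the factor by $\hat{\sigma}^2$ gives the quantity that plays the role of $\hat{\sigma}^2 a_{ii}(\lambda)$ in the interval for a new point.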

Smoothing Parameter

The quantity $\lambda$ is called the smoothing parameter, which controls the balance between the goodness of fit and the smoothness of the final estimate.

A large $\lambda$ heavily penalizes the mth derivative of the function, thus forcing $f^{(m)}$ close to 0. A small $\lambda$ places less of a penalty on rapid change in $f^{(m)}(\mb{x})$, resulting in an estimate that tends to interpolate the data points.

The smoothing parameter greatly affects the analysis, and it should be selected with care. One method is to perform several analyses with different values for $\lambda$ and compare the resulting final estimates.

A more objective way to select the smoothing parameter $\lambda$ is to use the "leave-out-one" cross validation function, which is an approximation of the predicted mean squares error. A generalized version of the leave-out-one cross validation function is proposed by Wahba (1990) and is easy to calculate. This generalized cross validation (GCV) function is defined as

![\[ \mbox{GCV}(\lambda )=\frac{(1/n)\| ({\mb{I}}-{\mb{A}}(\lambda )){\mb{y}}\| ^2}{[(1/n)\mr{tr}({\mb{I}}-{\mb{A}}(\lambda ))]^2} \]](images/statug_tpspline0029.png)
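The GCV function can be evaluated on a grid of candidate values and the minimizer picked by inspection. The NumPy sketch below is an illustration only: it assumes d = 2, m = 2, no semiparametric covariates, simulated data, and a coarse base-10 grid, and it does not claim to reproduce the search that the procedure performs. The function names are hypothetical. It relies on the identities $(\mb{I}-\mb{A}(\lambda))\mb{y} = n\lambda \mb{Q}_2[\mb{Q}_2'\mb{K}\mb{Q}_2 + n\lambda \mb{I}]^{-1}\mb{Q}_2'\mb{y}$ and $\mr{tr}(\mb{I}-\mb{A}(\lambda)) = n\lambda\, \mr{tr}([\mb{Q}_2'\mb{K}\mb{Q}_2 + n\lambda \mb{I}]^{-1})$, which follow from the form of the influence matrix.

```python
import numpy as np

def tps_kernel(a, b):
    """E_2 at all pairwise differences between rows of a and rows of b (d = 2, m = 2)."""
    r = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    k = np.zeros_like(r)
    pos = r > 0
    k[pos] = r[pos] ** 2 * np.log(r[pos]) / (8.0 * np.pi)
    return k

def gcv_curve(x, y, lams):
    """GCV(lam) for each candidate lam, with X = T (no Z columns)."""
    n = x.shape[0]
    X = np.column_stack([np.ones(n), x])
    Q, _ = np.linalg.qr(X, mode="complete")
    Q2 = Q[:, X.shape[1]:]
    QKQ = Q2.T @ tps_kernel(x, x) @ Q2           # computed once, reused for every lam
    z = Q2.T @ y
    scores = []
    for lam in lams:
        inner = QKQ + n * lam * np.eye(QKQ.shape[0])
        resid = n * lam * Q2 @ np.linalg.solve(inner, z)    # (I - A(lam)) y
        tr_i_minus_a = n * lam * np.trace(np.linalg.inv(inner))
        scores.append((resid @ resid / n) / (tr_i_minus_a / n) ** 2)
    return np.array(scores)

# Simulated data, purely for illustration
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(30, 2))
y = np.sin(2.0 * x[:, 0]) + x[:, 1] ** 2 + 0.1 * rng.standard_normal(30)

lams = 10.0 ** np.arange(-5, 1)                  # 1e-5, 1e-4, ..., 1
scores = gcv_curve(x, y, lams)
best_lam = lams[np.argmin(scores)]
for lam, score in zip(lams, scores):
    print(f"lambda={lam:g}  GCV={score:.6f}")
print("grid minimizer:", best_lam)
```

Computing $\mb{Q}_2'\mb{K}\mb{Q}_2$ once outside the loop keeps the cost of each additional grid point to a single linear solve.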

The justification for using the GCV function to select $\lambda$ relies on asymptotic theory. Thus, you cannot expect good results for very small sample sizes or when there is not enough information in the data to separate the model from the error component. Simulation studies suggest that for independent and identically distributed Gaussian noise, you can obtain reliable estimates of $\lambda$ for n greater than 25 or 30. Note that, even for large values of n (say, $n \ge 50$), in extreme Monte Carlo simulations there might be a small percentage of unwarranted extreme estimates in which $\hat{\lambda} = 0$ or $\hat{\lambda} = \infty$ (Wahba 1983). Generally, if $\sigma^2$ is known to within an order of magnitude, the occasional extreme case can be readily identified. As n gets larger, the effect becomes weaker.

The GCV function is fairly robust against nonhomogeneity of variances and non-Gaussian errors (Villalobos and Wahba 1987). Andrews (1988) has provided favorable theoretical results when variances are unequal. However, this selection method is likely to give unsatisfactory results when the errors are highly correlated.

The GCV value might be suspect when $\lambda$ is extremely small because computed values might become indistinguishable from zero. In practice, calculations with $\lambda = 0$ or $\lambda$ near 0 can cause numerical instabilities that result in an unsatisfactory solution. Simulation studies have shown that a $\lambda$ with $\log_{10}(n\lambda) < -8$ is small enough that the final estimate based on this $\lambda$ almost interpolates the data points. A GCV value based on a $\lambda \le 10^{-8}$ might not be accurate.