The AUTOREG Procedure


Heteroscedasticity- and Autocorrelation-Consistent Covariance Matrix Estimator

The heteroscedasticity-consistent covariance matrix estimator (HCCME), also known as the sandwich (or robust or empirical) covariance matrix estimator, is widely used because it consistently estimates the covariance matrix of the parameter estimates even when the form of the heteroscedasticity is unknown or misspecified. White (1980) introduces the original HCCME, known as HC0. However, HC0 can perform poorly in small samples. Davidson and MacKinnon (1993) propose three refinements of HC0, namely HC1, HC2, and HC3, which apply a degrees-of-freedom or leverage adjustment. Cribari-Neto (2004) proposes HC4 for data that contain high-leverage points.

HCCME can be expressed in the following general “sandwich” form:

\[ \Sigma = B^{-1} M B^{-1} \]

where $B$, which stands for “bread,” is the Hessian matrix and $M$, which stands for “meat,” is the outer product of gradient (OPG) with or without adjustment. For HC0, $M$ is the OPG without adjustment; that is,

\[ M_{\mathrm{HC0}} = \sum_{t=1}^{T} g_t g_t^{\prime} \]

where $T$ is the sample size and $g_t$ is the gradient vector of the $t$th observation. For HC1, $M$ is the OPG with the degrees-of-freedom correction; that is,

\[ M_{\mathrm{HC1}} = \frac{T}{T-k} \sum_{t=1}^{T} g_t g_t^{\prime} \]

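where $k$ is the number of parameters. For HC2, HC3, and HC4, the adjustment is related to leverage, namely,

\[ \begin{aligned} M_{\mathrm{HC2}} &= \sum_{t=1}^{T} \frac{g_t g_t^{\prime}}{1 - h_{tt}} \\ M_{\mathrm{HC3}} &= \sum_{t=1}^{T} \frac{g_t g_t^{\prime}}{(1 - h_{tt})^{2}} \\ M_{\mathrm{HC4}} &= \sum_{t=1}^{T} \frac{g_t g_t^{\prime}}{(1 - h_{tt})^{\min(4,\, T h_{tt}/k)}} \end{aligned} \]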

The leverage $h_{tt}$ is defined as $h_{tt} \equiv j_t^{\prime} \left( \sum_{t=1}^{T} j_t j_t^{\prime} \right)^{-1} j_t$, where $j_t$ is defined as follows:

- For an OLS model, $j_t$ is the column vector of regressors for the $t$th observation.

- For an AR error model, $j_t$ is the derivative vector of the $t$th residual with respect to the parameters.

- For a GARCH or heteroscedasticity model, $j_t$ is the gradient of the $t$th observation (that is, $g_t$).
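The adjustments can be illustrated on the smallest possible case. The following Python sketch is not part of PROC AUTOREG; it assumes an OLS model with a single regressor and no intercept, so that the bread, the meat, and the leverage are all scalars. It also assumes the standard forms of the adjustments: none for HC0, a factor of $T/(T-k)$ for HC1, and division of each term by $(1-h_{tt})$, $(1-h_{tt})^2$, and $(1-h_{tt})^{\min(4,\,T h_{tt}/k)}$ for HC2, HC3, and HC4. The function name and the scaling of the gradient are illustrative choices.

```python
def hccme_single_regressor(x, y):
    """HC0-HC4 variance estimates for the slope of y = beta * x + error.

    Illustrative scalar case (one regressor, no intercept), so the
    sandwich B^{-1} M B^{-1} reduces to M / B**2.
    """
    T, k = len(x), 1
    bread = sum(xi * xi for xi in x)                      # B: sum of x_t^2
    beta = sum(xi * yi for xi, yi in zip(x, y)) / bread   # OLS slope
    resid = [yi - beta * xi for xi, yi in zip(x, y)]
    g2 = [(xi * ei) ** 2 for xi, ei in zip(x, resid)]     # g_t g_t' with g_t = x_t * e_t
    h = [xi * xi / bread for xi in x]                     # leverage h_tt with j_t = x_t

    meat = {
        "HC0": sum(g2),
        "HC1": T / (T - k) * sum(g2),
        "HC2": sum(g / (1 - ht) for g, ht in zip(g2, h)),
        "HC3": sum(g / (1 - ht) ** 2 for g, ht in zip(g2, h)),
        "HC4": sum(g / (1 - ht) ** min(4.0, T * ht / k) for g, ht in zip(g2, h)),
    }
    cov = {name: m / bread ** 2 for name, m in meat.items()}
    return cov, h


cov, h = hccme_single_regressor([1.0, 2.0, 3.0, 4.0], [1.2, 1.9, 3.2, 3.9])
for name in sorted(cov):
    print(name, cov[name])
```

Because every leverage value lies strictly between 0 and 1 in this example, the leverage-based estimates are never smaller than HC0, and the leverages sum to the number of parameters.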

The heteroscedasticity- and autocorrelation-consistent (HAC) covariance matrix estimator can also be expressed in “sandwich” form:

\[ \Sigma = B^{-1} M B^{-1} \]

where $B$ is still the Hessian matrix, but $M$ is the kernel estimator in the following form:

\[ M_{\mathrm{HAC}} = a \left( \sum_{t=1}^{T} g_t g_t^{\prime} + \sum_{s=1}^{T-1} k\!\left( \frac{s}{b} \right) \sum_{t=1}^{T-s} \left( g_t g_{t+s}^{\prime} + g_{t+s} g_t^{\prime} \right) \right) \]

where $T$ is the sample size, $g_t$ is the gradient vector of the $t$th observation, $k(\cdot)$ is the real-valued kernel function, $b$ is the bandwidth parameter, and $a$ is the adjustment factor of small-sample degrees of freedom (that is, $a = 1$ if the ADJUSTDF option is not specified and otherwise $a = T/(T-k)$, where $k$ is the number of parameters). The types of kernel functions are listed in Table 8.2.
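As an illustration of how a kernel estimator of this kind weights the autocovariances, the following Python sketch computes the meat for a scalar gradient series. It is a sketch under stated assumptions, not the procedure's implementation: it assumes the usual form in which the lag-$s$ cross products are weighted by the kernel evaluated at $s/b$, it uses the standard Bartlett kernel as the default, and the function and argument names are hypothetical.

```python
def bartlett(x):
    """Standard Bartlett kernel: 1 - |x| on [-1, 1], 0 elsewhere."""
    return max(0.0, 1.0 - abs(x))


def hac_meat_scalar(g, bandwidth, kernel=bartlett, n_params=0, adjust_df=False):
    """Kernel-weighted 'meat' for a scalar gradient series g_1, ..., g_T."""
    T = len(g)
    # Small-sample factor a: 1 by default, T / (T - k) when requested
    a = T / (T - n_params) if adjust_df else 1.0
    total = sum(gt * gt for gt in g)                  # lag-0 term
    for s in range(1, T):
        weight = kernel(s / bandwidth)
        if weight == 0.0:
            continue
        cross = sum(g[t] * g[t + s] for t in range(T - s))
        total += weight * 2.0 * cross                 # g_t g_{t+s} + g_{t+s} g_t
    return a * total


g = [1.0, -1.0, 2.0, 0.5]
print(hac_meat_scalar(g, bandwidth=1.0))   # Bartlett weights vanish for s >= 1
print(hac_meat_scalar(g, bandwidth=2.0))   # lag 1 enters with weight 0.5
```

With a bandwidth of 1 the Bartlett weights are zero at every positive lag, so the result reduces to the unweighted sum of squares, which is the HC0 meat.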

Table 8.2: Kernel Functions

|

Kernel Name |

Equation |

|---|---|

|

Bartlett |

|

|

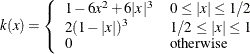

Parzen |

|

|

Quadratic spectral |

|

|

Truncated |

|

|

Tukey-Hanning |

|
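The five kernels named in Table 8.2 can be sketched in Python as follows. The definitions used here are the standard textbook forms of these kernels (as in Andrews 1991), which is an assumption about what the table contains; the function names are illustrative.

```python
import math


def bartlett(x):
    return 1.0 - abs(x) if abs(x) <= 1.0 else 0.0


def parzen(x):
    ax = abs(x)
    if ax <= 0.5:
        return 1.0 - 6.0 * ax ** 2 + 6.0 * ax ** 3
    if ax <= 1.0:
        return 2.0 * (1.0 - ax) ** 3
    return 0.0


def quadratic_spectral(x):
    if x == 0.0:
        return 1.0                      # limiting value as x -> 0
    z = 6.0 * math.pi * x / 5.0
    return 25.0 / (12.0 * math.pi ** 2 * x ** 2) * (math.sin(z) / z - math.cos(z))


def truncated(x):
    return 1.0 if abs(x) <= 1.0 else 0.0


def tukey_hanning(x):
    return (1.0 + math.cos(math.pi * x)) / 2.0 if abs(x) <= 1.0 else 0.0


for kernel in (bartlett, parzen, quadratic_spectral, truncated, tukey_hanning):
    print(kernel.__name__, [round(kernel(x), 4) for x in (0.0, 0.25, 0.5, 1.0, 1.5)])
```

All five kernels equal 1 at zero. Every kernel except the quadratic spectral kernel is zero outside $[-1, 1]$, so for those kernels the bandwidth also acts as a lag cutoff.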

When you specify BANDWIDTH=ANDREWS91, according to Andrews (1991) the bandwidth parameter is estimated as shown in Table 8.3.

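Table 8.3: Bandwidth Parameter Estimation

| Kernel Name | Bandwidth Parameter |
|---|---|
| Bartlett | $b = 1.1447 (\alpha(1) T)^{1/3}$ |
| Parzen | $b = 2.6614 (\alpha(2) T)^{1/5}$ |
| Quadratic spectral | $b = 1.3221 (\alpha(2) T)^{1/5}$ |
| Truncated | $b = 0.6611 (\alpha(2) T)^{1/5}$ |
| Tukey-Hanning | $b = 1.7462 (\alpha(2) T)^{1/5}$ |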

Let $\{g_{at}\}$ denote each series in $\{g_t\}$, and let $(\rho_a, \sigma_a^2)$ denote the corresponding estimates of the autoregressive and innovation variance parameters of the AR(1) model on $\{g_{at}\}$, $a = 1, \ldots, k$, where the AR(1) model is parameterized as $g_{at} = \rho_a g_{a,t-1} + \epsilon_{at}$ with $\mathrm{Var}(\epsilon_{at}) = \sigma_a^2$. The factors $\alpha(1)$ and $\alpha(2)$ are estimated with the following formulas:

\[ \alpha(1) = \frac{\sum_{a=1}^{k} \frac{4\rho_a^2 \sigma_a^4}{(1-\rho_a)^6 (1+\rho_a)^2}}{\sum_{a=1}^{k} \frac{\sigma_a^4}{(1-\rho_a)^4}} \]

\[ \alpha(2) = \frac{\sum_{a=1}^{k} \frac{4\rho_a^2 \sigma_a^4}{(1-\rho_a)^8}}{\sum_{a=1}^{k} \frac{\sigma_a^4}{(1-\rho_a)^4}} \]
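The two factors translate directly into code. The following Python sketch evaluates $\alpha(1)$ and $\alpha(2)$ from per-series AR(1) estimates; it assumes that those estimates have already been obtained, and the function and argument names are hypothetical.

```python
def andrews_alphas(rho, sigma2):
    """Compute alpha(1) and alpha(2) from per-series AR(1) estimates.

    rho    : autoregressive estimates rho_a, one per gradient series
    sigma2 : innovation variance estimates sigma_a^2, one per series
    """
    # sigma_a^4 in the formulas is the squared innovation variance
    denom = sum(s ** 2 / (1.0 - r) ** 4 for r, s in zip(rho, sigma2))
    num1 = sum(4.0 * r ** 2 * s ** 2 / ((1.0 - r) ** 6 * (1.0 + r) ** 2)
               for r, s in zip(rho, sigma2))
    num2 = sum(4.0 * r ** 2 * s ** 2 / (1.0 - r) ** 8
               for r, s in zip(rho, sigma2))
    return num1 / denom, num2 / denom


alpha1, alpha2 = andrews_alphas(rho=[0.5], sigma2=[1.0])
print(alpha1, alpha2)
```

For a single series the innovation variance cancels, and the factors reduce to $4\rho^2 / ((1-\rho)^2 (1+\rho)^2)$ and $4\rho^2 / (1-\rho)^4$. Both grow quickly as $\rho$ approaches 1, which leads to a larger bandwidth for strongly autocorrelated gradients.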

When you specify BANDWIDTH=NEWEYWEST94, according to Newey and West (1994) the bandwidth parameter is estimated as shown in Table 8.4.

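Table 8.4: Bandwidth Parameter Estimation

| Kernel Name | Bandwidth Parameter |
|---|---|
| Bartlett | $b = 1.1447 (\{s_1/s_0\}^2 T)^{1/3}$ |
| Parzen | $b = 2.6614 (\{s_1/s_0\}^2 T)^{1/5}$ |
| Quadratic spectral | $b = 1.3221 (\{s_1/s_0\}^2 T)^{1/5}$ |
| Truncated | $b = 0.6611 (\{s_1/s_0\}^2 T)^{1/5}$ |
| Tukey-Hanning | $b = 1.7462 (\{s_1/s_0\}^2 T)^{1/5}$ |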

The factors $s_1$ and $s_0$ are estimated with the following formulas:

\[ s_1 = 2 \sum_{j=1}^{n} j \sigma_j \qquad s_0 = \sigma_0 + 2 \sum_{j=1}^{n} \sigma_j \]

where $n$ is the lag selection parameter and is determined by kernels, as listed in Table 8.5.

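Table 8.5: Lag Selection Parameter Estimation

| Kernel Name | Lag Selection Parameter |
|---|---|
| Bartlett | $n = \lfloor c (T/100)^{2/9} \rfloor$ |
| Parzen | $n = \lfloor c (T/100)^{4/25} \rfloor$ |
| Quadratic spectral | $n = \lfloor c (T/100)^{2/25} \rfloor$ |
| Truncated | $n = \lfloor c (T/100)^{1/5} \rfloor$ |
| Tukey-Hanning | $n = \lfloor c (T/100)^{1/5} \rfloor$ |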

The factor $c$ in Table 8.5 is specified by the C= option; by default it is 12.

The factor $\sigma_j$ is estimated with the equation

\[ \sigma_j = T^{-1} \sum_{t=j+1}^{T} \left( \sum_{a=i}^{k} g_{at} \right) \left( \sum_{a=i}^{k} g_{a,t-j} \right), \quad j = 0, \ldots, n \]

where $i$ is 1 if the NOINT option in the MODEL statement is specified (otherwise, it is 2), and $g_{at}$ is the same as in the Andrews method.

If you specify BANDWIDTH=SAMPLESIZE, the bandwidth parameter is estimated with the equation

\[ b = \begin{cases} \gamma T^{r} + c & \text{if BANDWIDTH=SAMPLESIZE(INT) is not specified} \\ \lfloor \gamma T^{r} + c \rfloor & \text{otherwise} \end{cases} \]

where $T$ is the sample size; $\lfloor x \rfloor$ is the largest integer less than or equal to $x$; and $\gamma$, $r$, and $c$ are values specified by the BANDWIDTH=SAMPLESIZE(GAMMA=, RATE=, CONSTANT=) options, respectively.
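A sample-size rule of this kind is simple to evaluate by hand or in code. The following Python sketch assumes that the rule has the form $b = \gamma T^{r} + c$, optionally rounded down to an integer. The function name, the argument names, and the input values are illustrative only and are not the procedure's defaults.

```python
import math


def samplesize_bandwidth(T, gamma, rate, constant, integer=False):
    """Bandwidth from a sample-size rule b = gamma * T**rate + constant.

    Assumed form; `integer=True` applies the floor (largest integer <= b).
    """
    b = gamma * T ** rate + constant
    return math.floor(b) if integer else b


print(samplesize_bandwidth(100, gamma=0.75, rate=1.0 / 3.0, constant=0.5))
print(samplesize_bandwidth(100, gamma=0.75, rate=1.0 / 3.0, constant=0.5, integer=True))
```

Because the rule depends only on the sample size, the resulting bandwidth does not adapt to the autocorrelation in the data, unlike the data-driven Andrews and Newey-West methods.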

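If you specify the PREWHITENING option, $g_t$ is prewhitened by the VAR(1) model,

\[ g_t = A g_{t-1} + w_t \]

Then $M$ is calculated by

\[ M_{\mathrm{HAC}} = a (I - A)^{-1} \left( \sum_{t=1}^{T} w_t w_t^{\prime} + \sum_{s=1}^{T-1} k\!\left( \frac{s}{b} \right) \sum_{t=1}^{T-s} \left( w_t w_{t+s}^{\prime} + w_{t+s} w_t^{\prime} \right) \right) \left( (I - A)^{-1} \right)^{\prime} \]

The bandwidth calculation is also based on the prewhitened series $w_t$.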