The NLMIXED Procedure

This example uses the pump failure data of Gaver and O’Muircheartaigh (1987). The number of failures and the time of operation are recorded for 10 pumps. Each of the pumps is classified into one of two groups corresponding to either continuous or intermittent operation. The data are as follows:

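```sas
data pump;
   input y t group;
   pump = _n_;                      /* pump identifier: one row per pump */
   logtstd = log(t) - 2.4564900;    /* log operation time, centered at its sample mean */
   datalines;
 5  94.320 1
 1  15.720 2
 5  62.880 1
14 125.760 1
 3   5.240 2
19  31.440 1
 1   1.048 2
 1   1.048 2
 4   2.096 2
22  10.480 2
;
```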

Each row holds the data for a single pump. The variable logtstd contains the log operation times centered at their sample mean, 2.45649.
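As a quick check of the centering constant, the following Python sketch (outside SAS, for illustration only) recomputes the mean of log(t) over the 10 pumps and builds the same centered covariate as the DATA step. The names `PUMPS`, `center`, and `logtstd` are chosen here for the sketch.

```python
import math

# (failures y, operation time t, group) for the 10 pumps, copied from the DATA step
PUMPS = [
    (5, 94.320, 1), (1, 15.720, 2), (5, 62.880, 1), (14, 125.760, 1),
    (3, 5.240, 2), (19, 31.440, 1), (1, 1.048, 2), (1, 1.048, 2),
    (4, 2.096, 2), (22, 10.480, 2),
]

log_t = [math.log(t) for _, t, _ in PUMPS]
center = sum(log_t) / len(log_t)               # sample mean of log(t)
logtstd = [lt - 2.4564900 for lt in log_t]     # centered covariate, as in the DATA step

print(f"mean of log(t) = {center:.7f}")
print("logtstd:", [round(v, 4) for v in logtstd])
```

Because the constant is the sample mean, the centered values sum to (nearly) zero, which decorrelates the intercept and slope estimates within each group.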

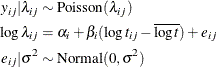

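Letting $y_{ij}$ denote the number of failures for the $j$th pump in the $i$th group, Draper (1996) considers the following hierarchical model for these data:

$$
\begin{aligned}
y_{ij} \mid \lambda_{ij} &\sim \text{Poisson}(\lambda_{ij}) \\
\log(\lambda_{ij}) &= \alpha_i + \beta_i \left( \log(t_{ij}) - \overline{\log(t)} \right) + e_{ij} \\
e_{ij} \mid \sigma^2 &\sim \text{Normal}(0, \sigma^2)
\end{aligned}
$$

Here $t_{ij}$ is the operation time, $\overline{\log(t)} = 2.45649$ is the mean log operation time (so the centered term is the variable logtstd), and $i = 1, 2$ indexes the two groups. In the PROC NLMIXED code, $\sigma$ is parameterized as logsig $= \log(\sigma)$, so the random-effect variance is exp(2*logsig).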

The model specifies different intercepts and slopes for each group, and the random effect is a mechanism for accounting for overdispersion.
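To see why a normal random effect on the log scale produces overdispersion: if $e \sim N(0, \sigma^2)$, then $\lambda = \exp(\eta_0 + e)$ is lognormal, so marginally $E(y) = \exp(\eta_0 + \sigma^2/2)$ and, by the law of total variance, $\mathrm{Var}(y) = E(y) + \{\exp(\sigma^2) - 1\}E(y)^2$, which exceeds $E(y)$ whenever $\sigma > 0$. The Python sketch below evaluates these moments. It plugs in $\sigma = \exp(-0.3161)$ from the logsig estimate in Output 70.4.4; the linear predictor `eta0 = 2.0` is an arbitrary illustrative value, not a fitted quantity.

```python
import math

def marginal_moments(eta0: float, sigma: float) -> tuple[float, float]:
    """Marginal mean and variance of y when y | e ~ Poisson(exp(eta0 + e)) and e ~ N(0, sigma^2)."""
    s2 = sigma ** 2
    mean = math.exp(eta0 + s2 / 2)               # E[lambda] for a lognormal lambda
    var_lambda = (math.exp(s2) - 1) * mean ** 2  # Var[lambda]
    var = mean + var_lambda                      # E[Var(y | e)] + Var(E[y | e])
    return mean, var

sigma_hat = math.exp(-0.3161)                        # back-transformed logsig estimate
m, v = marginal_moments(eta0=2.0, sigma=sigma_hat)   # eta0 = 2.0 is illustrative only
print(f"mean={m:.3f}  variance={v:.3f}  variance/mean={v / m:.3f}")
```

With `sigma=0` the two moments coincide, recovering the ordinary Poisson mean-variance equality.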

The corresponding PROC NLMIXED statements are as follows:

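```sas
proc nlmixed data=pump;
   parms logsig 0 beta1 1 beta2 1 alpha1 1 alpha2 1;   /* starting values */
   /* group-specific intercept and slope on centered log time, plus random effect e */
   if (group = 1) then eta = alpha1 + beta1*logtstd + e;
   else eta = alpha2 + beta2*logtstd + e;
   lambda = exp(eta);
   model y ~ poisson(lambda);
   /* one random effect per pump, with variance exp(2*logsig) */
   random e ~ normal(0,exp(2*logsig)) subject=pump;
   estimate 'alpha1-alpha2' alpha1-alpha2;
   estimate 'beta1-beta2' beta1-beta2;
run;
```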
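PROC NLMIXED maximizes the marginal likelihood, in which the random effect e is integrated out of each pump's Poisson likelihood; by default that integral is approximated with adaptive Gaussian quadrature. The Python sketch below illustrates the idea and is not the procedure's implementation. For each pump it finds the mode of the log-concave integrand by Newton's method, centers and scales Gauss-Hermite nodes there, and sums the negative log marginal likelihoods. Assumptions: NumPy is available, the rounded estimates from Output 70.4.4 are used, and the number of nodes is fixed rather than chosen adaptively as PROC NLMIXED does. The printed value can be compared with the final negative log likelihood in Output 70.4.2.

```python
import math
from numpy.polynomial.hermite import hermgauss  # nodes/weights for weight exp(-x^2)

# (y, t, group) for the 10 pumps, as in the DATA step
PUMPS = [
    (5, 94.320, 1), (1, 15.720, 2), (5, 62.880, 1), (14, 125.760, 1),
    (3, 5.240, 2), (19, 31.440, 1), (1, 1.048, 2), (1, 1.048, 2),
    (4, 2.096, 2), (22, 10.480, 2),
]
CENTER = 2.4564900  # centering constant used for logtstd

def log_integrand(e, y, eta0, sigma):
    """log[ Poisson(y; exp(eta0 + e)) * Normal(e; 0, sigma^2) ]"""
    eta = eta0 + e
    log_pois = y * eta - math.exp(eta) - math.lgamma(y + 1)
    log_norm = -0.5 * math.log(2 * math.pi * sigma ** 2) - e ** 2 / (2 * sigma ** 2)
    return log_pois + log_norm

def marginal_nll(params, n_nodes=20):
    """Negative log marginal likelihood via adaptive Gauss-Hermite quadrature."""
    logsig, beta1, beta2, alpha1, alpha2 = params
    sigma = math.exp(logsig)
    x, w = hermgauss(n_nodes)
    nll = 0.0
    for y, t, group in PUMPS:
        logtstd = math.log(t) - CENTER
        eta0 = alpha1 + beta1 * logtstd if group == 1 else alpha2 + beta2 * logtstd
        # Newton's method for the mode of the log-concave integrand in e
        e_hat = 0.0
        for _ in range(100):
            grad = y - math.exp(eta0 + e_hat) - e_hat / sigma ** 2
            hess = -math.exp(eta0 + e_hat) - 1.0 / sigma ** 2
            step = grad / hess
            e_hat -= step
            if abs(step) < 1e-12:
                break
        hess = -math.exp(eta0 + e_hat) - 1.0 / sigma ** 2
        scale = math.sqrt(2.0) / math.sqrt(-hess)  # sqrt(2) * s, with s from the curvature at the mode
        # integral ~= sqrt(2) s * sum_k w_k f(e_hat + sqrt(2) s x_k) exp(x_k^2)
        total = sum(
            wk * math.exp(log_integrand(e_hat + scale * xk, y, eta0, sigma) + xk ** 2)
            for xk, wk in zip(x, w)
        )
        nll -= math.log(scale * total)
    return nll

ESTIMATES = (-0.3161, -0.4256, 0.6097, 2.9644, 1.7992)  # logsig, beta1, beta2, alpha1, alpha2
print(f"negative log likelihood ~ {marginal_nll(ESTIMATES):.4f}")
```

With a single node this reduces to the Laplace approximation; adding nodes refines the integral, and the centering at the mode is what lets a small number of nodes suffice.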

The selected output is as follows.

The "Dimensions" table indicates that data for 10 pumps are used with one observation for each (Output 70.4.1).

Output 70.4.2: Iteration History for Poisson-Normal Model

| Iteration | Calls | Negative Log Likelihood | Difference | Maximum Gradient | Slope |
|---|---|---|---|---|---|
| 1 | 4 | 30.6986932 | 2.162768 | 5.10725 | -91.6020 |
| 2 | 9 | 30.0255468 | 0.673146 | 2.76174 | -11.0489 |
| 3 | 12 | 29.7263250 | 0.299222 | 2.99040 | -2.36048 |
| 4 | 16 | 28.7390263 | 0.987299 | 2.07443 | -3.93678 |
| 5 | 18 | 28.3161933 | 0.422833 | 0.61253 | -0.63084 |
| 6 | 21 | 28.0956400 | 0.220553 | 0.46216 | -0.52684 |
| 7 | 24 | 28.0438024 | 0.051838 | 0.40505 | -0.10018 |
| 8 | 27 | 28.0357134 | 0.008089 | 0.13506 | -0.01875 |
| 9 | 30 | 28.0339250 | 0.001788 | 0.026279 | -0.00514 |
| 10 | 33 | 28.0338744 | 0.000051 | 0.004020 | -0.00012 |
| 11 | 36 | 28.0338727 | 1.681E-6 | 0.002864 | -5.09E-6 |
| 12 | 39 | 28.0338724 | 3.199E-7 | 0.000147 | -6.87E-7 |
| 13 | 42 | 28.0338724 | 2.532E-9 | 0.000017 | -5.75E-9 |

The "Iteration History" table indicates successful convergence in 13 iterations (Output 70.4.2).
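The Difference column is the decrease in the negative log likelihood from the previous iteration (for iteration 1, from the value at the starting parameters). The short Python check below recomputes it from the printed values. Agreement holds only up to the seven printed decimals, so the last few differences, which are smaller than that precision, cannot be recovered exactly.

```python
# Negative log likelihood after each iteration, copied from Output 70.4.2
NLL = [30.6986932, 30.0255468, 29.7263250, 28.7390263, 28.3161933, 28.0956400,
       28.0438024, 28.0357134, 28.0339250, 28.0338744, 28.0338727, 28.0338724,
       28.0338724]
# Reported "Difference" column for iterations 2-13
REPORTED_DIFF = [0.673146, 0.299222, 0.987299, 0.422833, 0.220553, 0.051838,
                 0.008089, 0.001788, 0.000051, 1.681e-6, 3.199e-7, 2.532e-9]

recomputed = [prev - cur for prev, cur in zip(NLL, NLL[1:])]
start_nll = NLL[0] + 2.162768  # iteration 1 Difference implies the starting value

for it, (r, d) in enumerate(zip(recomputed, REPORTED_DIFF), start=2):
    print(f"iter {it:2d}: recomputed {r:.7f}  reported {d:.7g}")
print(f"implied negative log likelihood at the starting values: {start_nll:.7f}")
```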

The "Fit Statistics" table lists the final log likelihood and associated information criteria (Output 70.4.3).
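Output 70.4.3 is not reproduced here. As a sketch, assuming the usual definitions $-2\log L$ and $\text{AIC} = -2\log L + 2p$ with $p = 5$ parameters (logsig, beta1, beta2, alpha1, alpha2), the first two criteria follow from the final negative log likelihood in Output 70.4.2. AICC and BIC also depend on a sample-size convention that this section does not state, so the sketch omits them.

```python
FINAL_NLL = 28.0338724   # iteration 13, Output 70.4.2
N_PARAMS = 5             # logsig, beta1, beta2, alpha1, alpha2

minus2_loglik = 2 * FINAL_NLL
aic = minus2_loglik + 2 * N_PARAMS   # assumes AIC = -2 log L + 2p
print(f"-2 Log Likelihood = {minus2_loglik:.4f}, AIC = {aic:.4f}")
```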

Output 70.4.4: Parameter Estimates and Additional Estimates

| Parameter | Estimate | Standard Error | DF | t Value | Pr > \|t\| | Lower 95% Limit | Upper 95% Limit | Gradient |
|---|---|---|---|---|---|---|---|---|
| logsig | -0.3161 | 0.3213 | 9 | -0.98 | 0.3508 | -1.0429 | 0.4107 | -0.00002 |
| beta1 | -0.4256 | 0.7473 | 9 | -0.57 | 0.5829 | -2.1162 | 1.2649 | -0.00002 |
| beta2 | 0.6097 | 0.3814 | 9 | 1.60 | 0.1443 | -0.2530 | 1.4725 | -1.61E-6 |
| alpha1 | 2.9644 | 1.3826 | 9 | 2.14 | 0.0606 | -0.1632 | 6.0921 | -5.25E-6 |
| alpha2 | 1.7992 | 0.5492 | 9 | 3.28 | 0.0096 | 0.5568 | 3.0415 | -5.73E-6 |
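Each t Value is the estimate divided by its standard error, and the 95% limits are the estimate plus or minus $t_{0.975,9}$ times the standard error, using the 9 degrees of freedom in the DF column. The Python sketch below recomputes these from the rounded table entries (so agreement is only to about the fourth decimal) and back-transforms logsig to $\sigma = \exp(\text{logsig})$. The quantile 2.262157 is hard-coded because Python's standard library has no t quantile function.

```python
import math

T_975_9 = 2.262157  # 0.975 quantile of the t distribution with 9 degrees of freedom

# name: (estimate, standard error, reported t value, reported lower, reported upper)
TABLE = {
    "logsig": (-0.3161, 0.3213, -0.98, -1.0429, 0.4107),
    "beta1": (-0.4256, 0.7473, -0.57, -2.1162, 1.2649),
    "beta2": (0.6097, 0.3814, 1.60, -0.2530, 1.4725),
    "alpha1": (2.9644, 1.3826, 2.14, -0.1632, 6.0921),
    "alpha2": (1.7992, 0.5492, 3.28, 0.5568, 3.0415),
}

results = {}
for name, (est, se, t_rep, lo_rep, hi_rep) in TABLE.items():
    t_val = est / se
    lo, hi = est - T_975_9 * se, est + T_975_9 * se
    results[name] = (t_val, lo, hi)
    print(f"{name:7s} t={t_val:6.2f}  95% CI=({lo:.4f}, {hi:.4f})")

sigma = math.exp(TABLE["logsig"][0])  # random-effect SD on the original scale
print(f"sigma = {sigma:.4f}, sigma^2 = {sigma ** 2:.4f}")
```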

The "Parameter Estimates" and "Additional Estimates" tables list the maximum likelihood estimates for each of the parameters and for the two differences, alpha1-alpha2 and beta1-beta2, requested in the ESTIMATE statements (Output 70.4.4). The point estimates for the mean parameters agree fairly closely with the Bayesian posterior means reported by Draper (1996); however, the likelihood-based standard errors are roughly half the Bayesian posterior standard deviations. This is most likely because the Bayesian standard deviations account for the uncertainty in estimating $\sigma^2$, whereas the likelihood values plug in its estimated value. This downward bias can be corrected somewhat by using the $t_9$ distribution shown here.
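To put "somewhat" in numbers: the $t_9$ limits in Output 70.4.4 multiply each standard error by $t_{0.975,9} \approx 2.262$ instead of the normal quantile $\approx 1.960$, so every interval is about 15% wider than a normal-based one. Since the likelihood standard errors are roughly half the Bayesian posterior standard deviations, this widening closes only a small part of that gap. The Python sketch below shows the arithmetic for alpha2; the t quantile is hard-coded, as the standard library provides only the normal quantile.

```python
from statistics import NormalDist

Z_975 = NormalDist().inv_cdf(0.975)  # normal 0.975 quantile, about 1.96
T_975_9 = 2.262157                   # t quantile with 9 degrees of freedom (hard-coded)

widening = T_975_9 / Z_975
est, se = 1.7992, 0.5492             # alpha2 from Output 70.4.4
z_ci = (est - Z_975 * se, est + Z_975 * se)
t_ci = (est - T_975_9 * se, est + T_975_9 * se)

print(f"t_9 intervals are {100 * (widening - 1):.1f}% wider than normal-based ones")
print(f"alpha2: normal ({z_ci[0]:.4f}, {z_ci[1]:.4f}), t_9 ({t_ci[0]:.4f}, {t_ci[1]:.4f})")
```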