Copy Data from Hadoop

Using Copy Data from Hadoop

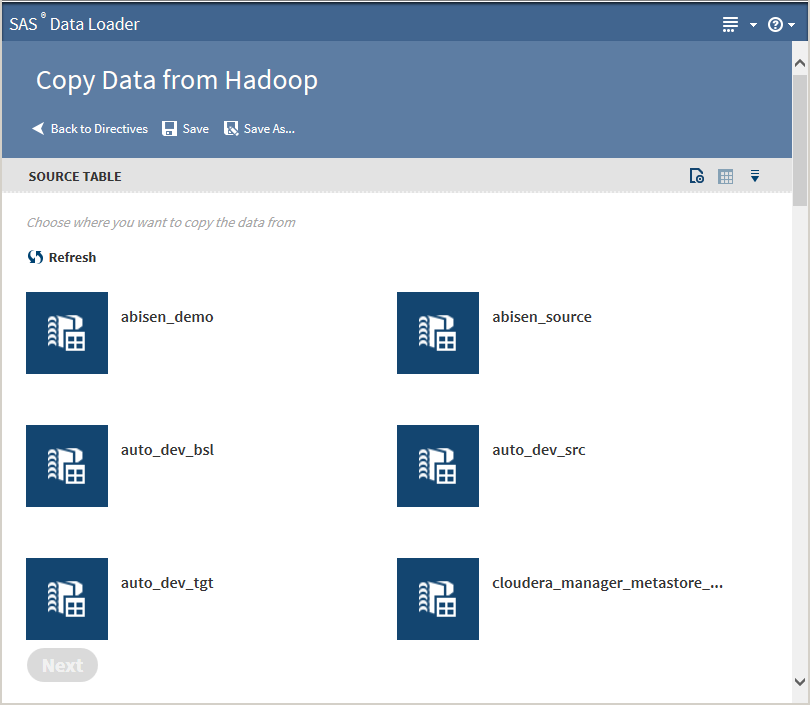

Follow these steps to

copy data from Hadoop into a database:

-

Clicking the Action menu

enables the following actions:

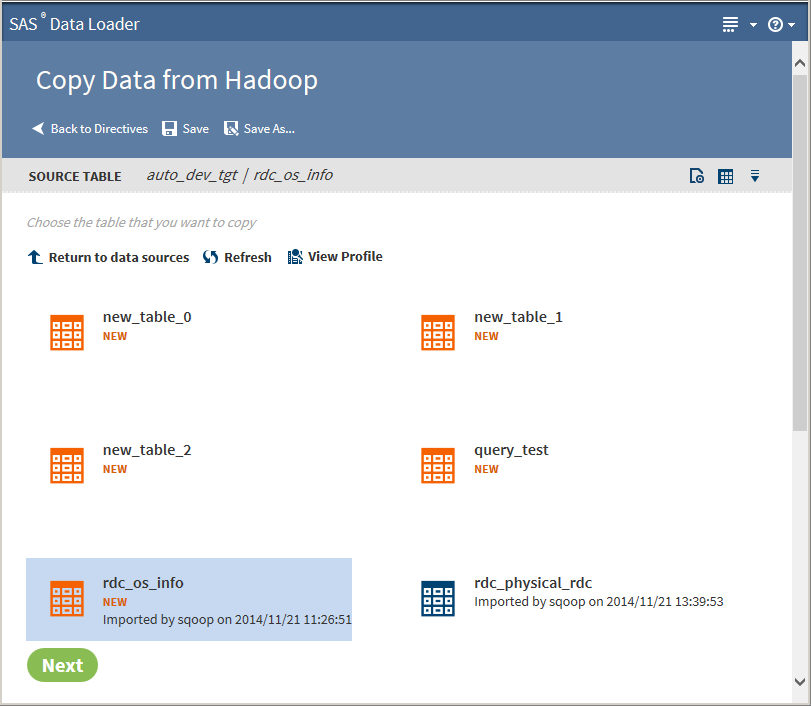

Openopens the current directive page.Table Viewerenables you to view sample data from a table. Select the table, and then click

enables the following actions:

Openopens the current directive page.Table Viewerenables you to view sample data from a table. Select the table, and then click to display the SAS Table Viewer.Advanced Optionsopens a dialog box that enables you to specify the maximum length for SAS columns. Entering a value here overrides the value specified in the Configuration options.Note: If the source table has String data types, the resulting SAS data set could be very large. The length of the target field in the SAS data set is determined by the value of this option.When table selection is complete, click Next. The Options page is displayed:

to display the SAS Table Viewer.Advanced Optionsopens a dialog box that enables you to specify the maximum length for SAS columns. Entering a value here overrides the value specified in the Configuration options.Note: If the source table has String data types, the resulting SAS data set could be very large. The length of the target field in the SAS data set is determined by the value of this option.When table selection is complete, click Next. The Options page is displayed: -

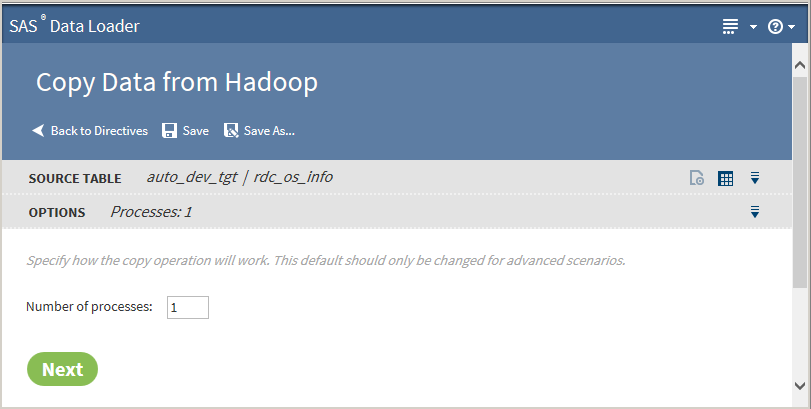

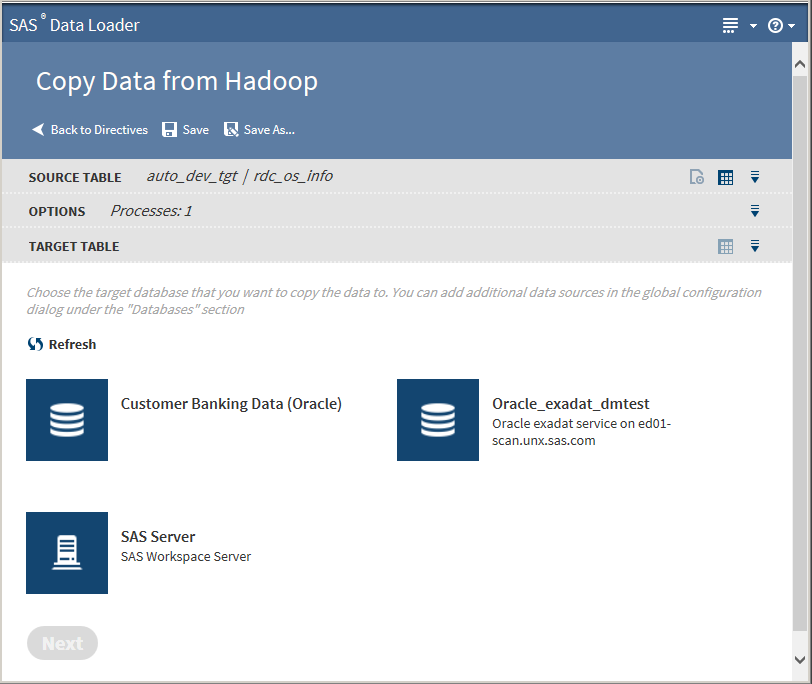

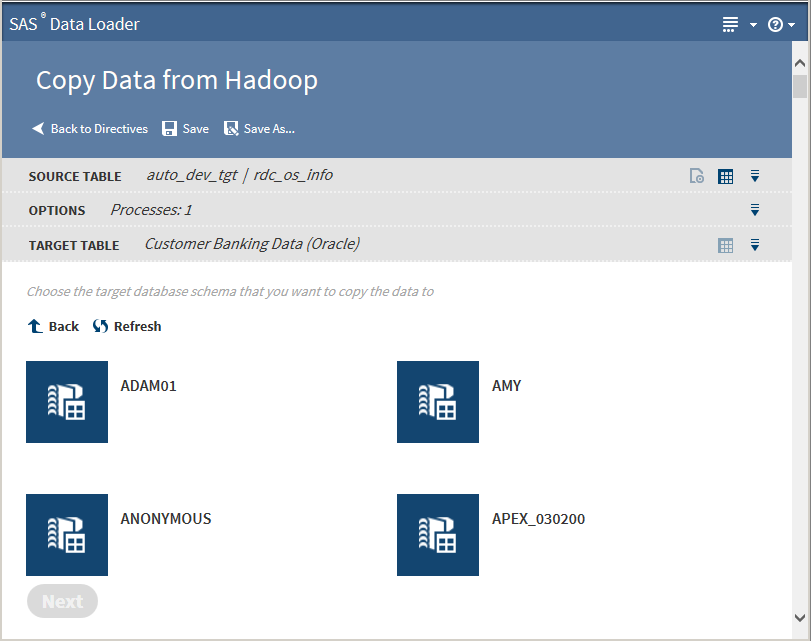

The value on the Options page should not be changed unless you have advanced knowledge of database operations.Note: Changing the number of processes to greater than one expands the number of processes and source data connections that are used to import data.Click Next. The Target Table page is displayed with target databases:

-

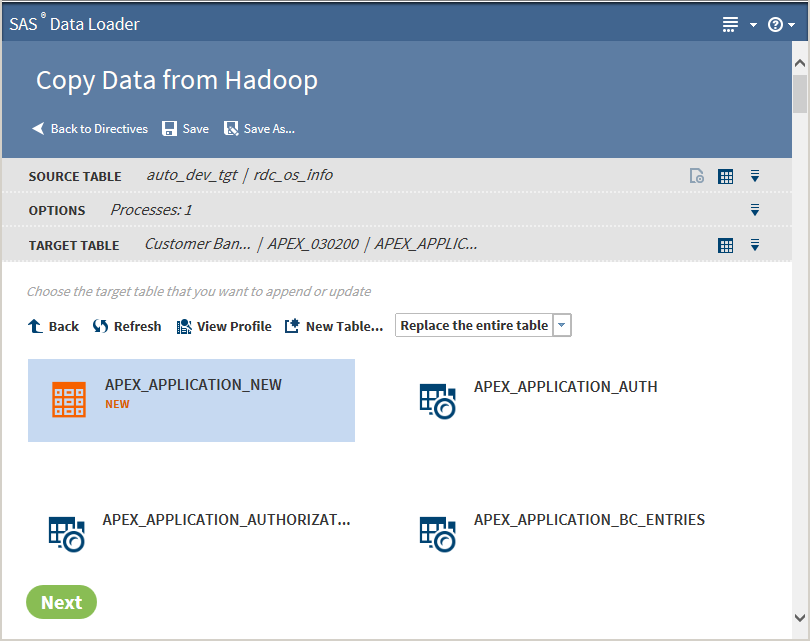

Tip

-

You can create a new table by clicking New Table

-

If a profile already exists for a table, PROFILED appears next the table icon. You can view the existing profile by selecting the table and clicking View Profile.

Clicking the Action menu enables the following actions:

Openopens the current directive page.Table Viewerenables you to view sample data from a table. Select the table, and then click

enables the following actions:

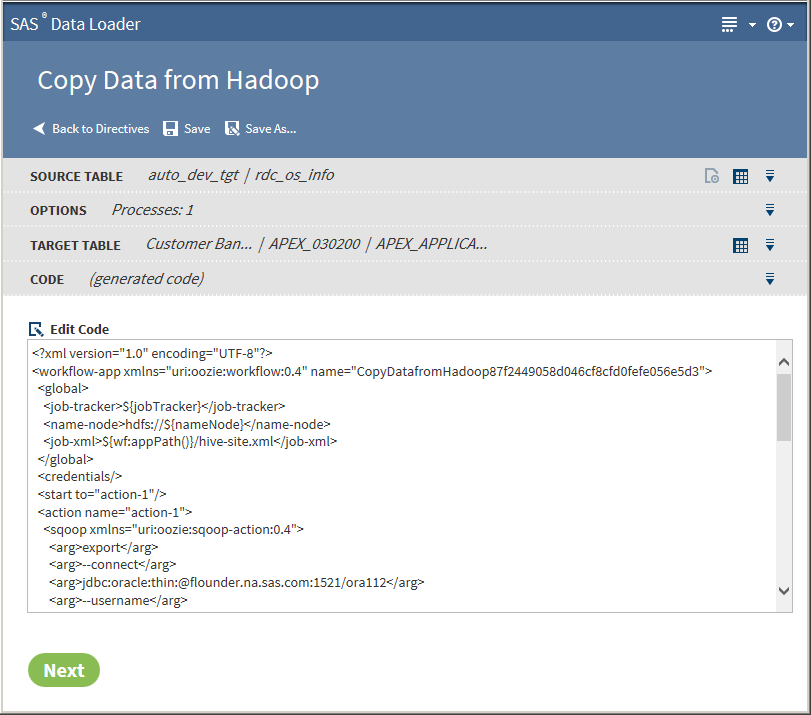

Openopens the current directive page.Table Viewerenables you to view sample data from a table. Select the table, and then click to display the SAS Table Viewer.Click Next. The Code page is displayed:

to display the SAS Table Viewer.Click Next. The Code page is displayed: -

-

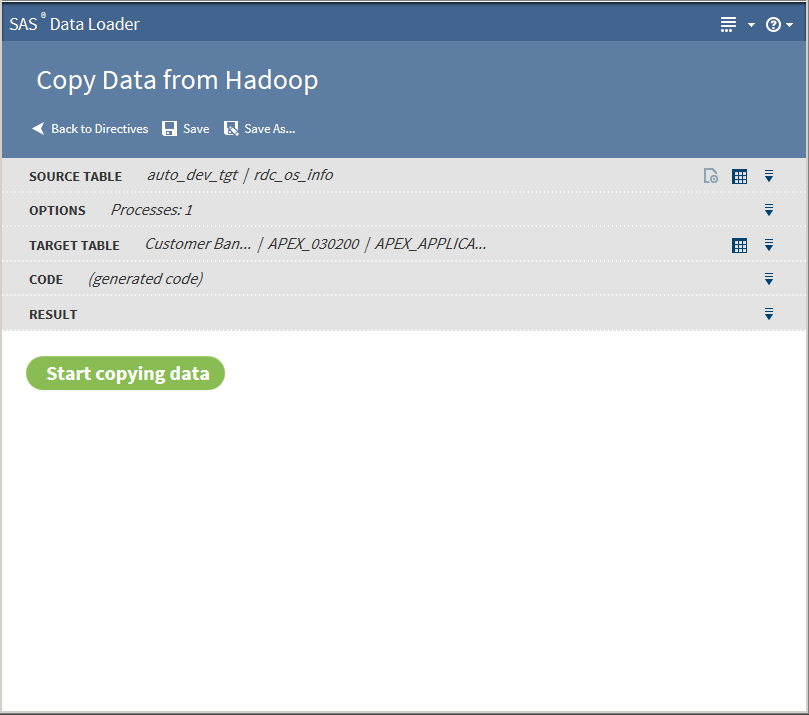

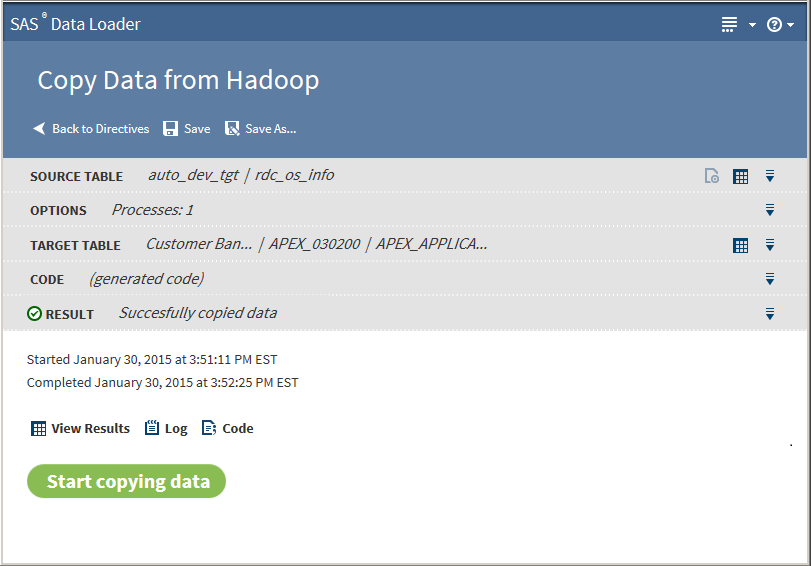

The following actions are available:View Resultsenables you to view the results of the copy process in the SAS Table Viewer.theLogdisplays the SAS log that is generated during the copy process.Codedisplays the SAS code that copies the data.

About Drivers and Connections

The Copy Data from Hadoop

directive uses JDBC drivers to connect from your client machine to

databases. The JDBC drivers on your client machine must be the same

as those that are installed on the Hadoop cluster. See Install JDBC Drivers and Add Database Connectionsfor more information.

Usage Notes

-

See the usage note Changing the Default Maximum Length for SAS Character Columns.

-

The hive.resultset.use.unique.column.names entry in the hive-site.xml file on the target Hadoop cluster must be set to False. If not, you might see an error message in the job history log. If you encounter an error, contact your Hadoop administrator.

-

Source tables with a large number of columns can cause Copy From Hadoop jobs to fail. The job runs until the target table reaches the maximum number of columns that are supported in the target database. To resolve the problem, reduce the number of columns that are selected for the target and run the job again.

-

If one or more varchar or string columns from a source Hadoop table contains more string data than the target database column, the Copy Data from Hadoop request times out. For example, a source Hadoop table might contain a string column named myString and a target Oracle table might contain a varchar(4000) column also named myString. If data in the Hadoop myString column has a length greater than 4000, then the copy request fails.

-

When copying a Hadoop table to a database, a column name specified in the array of struct in the Hadoop table is not copied to the database table. This happens because of how structs are mapped to varchars in Sqoop.

-

A copy from Hadoop is likely to fail if the name of a source column is also a reserved word in the target database.

-

When copying a Hadoop table to Oracle, a mixed-case schema name generates an error.

-

When copying a Hadoop table to Oracle, timestamp columns in Hadoop generate errors in Oracle. The Hive timestamp format differs from the Oracle timestamp format. To resolve this issue, change the column type in the Oracle target table from timestamp to varchar2.

-

If a copy fails due to errors in null value formats, edit your code so that null string and null non-string arguments contain the value

null:<arg>--input-null-string</arg>

<arg>null</arg>

<arg>--input-null-non-string</arg>

<arg>null</arg>

<arg>--input-null-string</arg>

<arg>null</arg>

<arg>--input-null-non-string</arg>

<arg>null</arg>

-

To copy Hadoop tables to Teradata, when the source contains a double-byte character set (DBCS) such as Chinese, follow these steps:

Copyright © SAS Institute Inc. All rights reserved.