The NLPC Nonlinear Optimization Solver

Conditions of Optimality

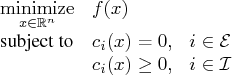

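To facilitate discussion of the optimality conditions, we rewrite the general form of nonlinear optimization problems from the section "Overview" by grouping the equality constraints and inequality constraints. We also rewrite all the general nonlinear inequality constraints and bound constraints in one form as "$\ge$" inequality constraints. Thus we have the following formulation:

$$
\begin{array}{ll}
\displaystyle \mathop{\text{minimize}}_{x \in \mathbb{R}^n} & f(x) \\
\text{subject to} & c_i(x) = 0, \quad i \in \mathcal{E} \\
 & c_i(x) \ge 0, \quad i \in \mathcal{I}
\end{array}
$$

where $\mathcal{E}$ is the set of indices of the equality constraints, $\mathcal{I}$ is the set of indices of the inequality constraints, and $m = |\mathcal{E}| + |\mathcal{I}|$ is the total number of constraints.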

A point $x$ is feasible if it satisfies all the constraints $c_i(x) = 0$, $i \in \mathcal{E}$, and $c_i(x) \ge 0$, $i \in \mathcal{I}$. The feasible region $\mathcal{F}$ consists of all the feasible points. In unconstrained cases, the feasible region $\mathcal{F}$ is the entire $\mathbb{R}^n$ space.
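The feasibility test can be sketched in a few lines of Python. This is only an illustration, not part of the NLPC solver: the function name `is_feasible`, the tolerance `tol`, and the example constraints are all assumptions made for this sketch.

```python
def is_feasible(x, eq_constraints, ineq_constraints, tol=1e-8):
    """Return True if c_i(x) = 0 for every equality constraint and
    c_i(x) >= 0 for every inequality constraint, within tolerance tol."""
    eq_ok = all(abs(c(x)) <= tol for c in eq_constraints)
    ineq_ok = all(c(x) >= -tol for c in ineq_constraints)
    return eq_ok and ineq_ok


# Hypothetical example: x0 + x1 = 1, with the bounds x0 >= 0 and x1 <= 2.
# The upper bound is rewritten in ">=" form as 2 - x1 >= 0.
eq = [lambda x: x[0] + x[1] - 1.0]
ineq = [lambda x: x[0], lambda x: 2.0 - x[1]]

print(is_feasible([0.25, 0.75], eq, ineq))  # True
print(is_feasible([-0.5, 1.5], eq, ineq))   # False: violates x0 >= 0
```

A finite tolerance is used because constraint values computed in floating point are rarely exactly zero.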

A feasible point $x^*$ is a local solution of the problem if there exists a neighborhood $\mathcal{N}$ of $x^*$ such that

$$
f(x) \ge f(x^*) \quad \text{for all } x \in \mathcal{N} \cap \mathcal{F}
$$

It is a strict local solution if the inequality is strict for all such $x \ne x^*$.

All the algorithms in the NLPC solver find a local solution of an optimization problem.

Unconstrained Optimization

The following conditions hold true for unconstrained optimization problems:

- First-order necessary conditions: If $x^*$ is a local solution and $f(x)$ is continuously differentiable in some neighborhood of $x^*$, then $\nabla f(x^*) = 0$.

- Second-order necessary conditions: If $x^*$ is a local solution and $f(x)$ is twice continuously differentiable in some neighborhood of $x^*$, then $\nabla^2 f(x^*)$ is positive semidefinite.

- Second-order sufficient conditions: If $f(x)$ is twice continuously differentiable in some neighborhood of $x^*$, $\nabla f(x^*) = 0$, and $\nabla^2 f(x^*)$ is positive definite, then $x^*$ is a strict local solution.
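As an illustration, the second-order sufficient conditions can be tested numerically at a candidate point. The sketch below is not the NLPC implementation. It assumes analytic derivatives are available, uses the Rosenbrock function as a hypothetical test problem, and tests positive definiteness by attempting a Cholesky factorization. All function names are made up for this example.

```python
import math


def grad_rosenbrock(x):
    """Gradient of f(x) = (1 - x0)^2 + 100 (x1 - x0^2)^2."""
    x0, x1 = x
    return [-2.0 * (1.0 - x0) - 400.0 * x0 * (x1 - x0 * x0),
            200.0 * (x1 - x0 * x0)]


def hess_rosenbrock(x):
    """Hessian of the same function."""
    x0, x1 = x
    return [[2.0 - 400.0 * (x1 - 3.0 * x0 * x0), -400.0 * x0],
            [-400.0 * x0, 200.0]]


def is_positive_definite(a):
    """Attempt a Cholesky factorization of the symmetric matrix a.
    The factorization succeeds only if a is positive definite."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:
                    return False
                low[i][i] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return True


def satisfies_sufficient_conditions(grad, hess, x, tol=1e-8):
    """True if the gradient vanishes (within tol) and the Hessian is positive definite."""
    stationary = max(abs(g) for g in grad(x)) <= tol
    return stationary and is_positive_definite(hess(x))


# The Rosenbrock minimizer (1, 1) passes the test.
print(satisfies_sufficient_conditions(grad_rosenbrock, hess_rosenbrock, [1.0, 1.0]))  # True

# The origin is a saddle point of x0^2 - x1^2: the gradient vanishes there,
# but the Hessian is indefinite, so the test fails.
saddle_grad = lambda x: [2.0 * x[0], -2.0 * x[1]]
saddle_hess = lambda x: [[2.0, 0.0], [0.0, -2.0]]
print(satisfies_sufficient_conditions(saddle_grad, saddle_hess, [0.0, 0.0]))  # False
```

Failing this test does not rule out a local solution, because the conditions are sufficient rather than necessary: a point with a singular but positive semidefinite Hessian can still be a minimizer.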

Constrained Optimization

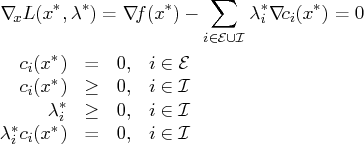

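For constrained optimization problems, the Lagrangian function is defined as follows:

$$
L(x, \lambda) = f(x) - \sum_{i \in \mathcal{E} \cup \mathcal{I}} \lambda_i c_i(x)
$$

where $\lambda_i$, $i \in \mathcal{E} \cup \mathcal{I}$, are called Lagrange multipliers. We use $\nabla_x L(x, \lambda)$ and $\nabla_x^2 L(x, \lambda)$ to denote the gradient and the Hessian of the Lagrangian function with respect to $x$. The active set at a feasible point $x$ is defined as $\mathcal{A}(x) = \mathcal{E} \cup \{ i \in \mathcal{I} : c_i(x) = 0 \}$.

We also need the following definition before we can state the first-order and second-order necessary conditions:

- Linear independence constraint qualification and regular point: A point $x$ is said to satisfy the linear independence constraint qualification if the gradients of active constraints, $\nabla c_i(x)$, $i \in \mathcal{A}(x)$, are linearly independent. We refer to such a point $x$ as a regular point.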
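The linear independence constraint qualification can be checked numerically by computing the rank of the matrix whose rows are the active constraint gradients. The following sketch is an illustration under stated assumptions, not a solver routine: the rank is computed by plain Gaussian elimination with a fixed tolerance, and the names `matrix_rank` and `satisfies_licq` are hypothetical.

```python
def matrix_rank(rows, tol=1e-10):
    """Rank of a small dense matrix by Gaussian elimination with partial pivoting."""
    a = [list(map(float, r)) for r in rows]
    n_cols = len(a[0]) if a else 0
    rank = 0
    for col in range(n_cols):
        # Choose the largest remaining entry in this column as the pivot.
        pivot = max(range(rank, len(a)), key=lambda r: abs(a[r][col]), default=None)
        if pivot is None or abs(a[pivot][col]) <= tol:
            continue
        a[rank], a[pivot] = a[pivot], a[rank]
        for r in range(rank + 1, len(a)):
            factor = a[r][col] / a[rank][col]
            for c in range(col, n_cols):
                a[r][c] -= factor * a[rank][c]
        rank += 1
    return rank


def satisfies_licq(active_gradients, tol=1e-10):
    """True if the active constraint gradients are linearly independent."""
    if not active_gradients:
        return True  # no active constraints: the condition holds trivially
    return matrix_rank(active_gradients, tol) == len(active_gradients)


# Hypothetical example at x = (0, 0) with c1(x) = x0 >= 0 and c2(x) = x1 >= 0.
# Both constraints are active, with gradients (1, 0) and (0, 1).
print(satisfies_licq([[1.0, 0.0], [0.0, 1.0]]))   # True: regular point

# Hypothetical example at x = (0, 0) with c1(x) = x1 >= 0 and c2(x) = x0^3 - x1 >= 0.
# The active gradients (0, 1) and (0, -1) are linearly dependent.
print(satisfies_licq([[0.0, 1.0], [0.0, -1.0]]))  # False: not a regular point
```

For large or ill-conditioned problems, a rank estimate from a singular value decomposition is generally more reliable than elimination with a fixed tolerance.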

We now state the theorems that are essential in the analysis and design of algorithms for constrained optimization:

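- First-order necessary conditions: Suppose that $x^*$ is a local minimum and also a regular point. If $f(x)$ and $c_i(x)$, $i \in \mathcal{E} \cup \mathcal{I}$, are continuously differentiable, there exist Lagrange multipliers $\lambda_i^*$, $i \in \mathcal{E} \cup \mathcal{I}$, such that the following conditions hold:

  $$
  \begin{aligned}
  \nabla f(x^*) - \sum_{i \in \mathcal{E} \cup \mathcal{I}} \lambda_i^* \nabla c_i(x^*) &= 0 \\
  c_i(x^*) &= 0, \quad i \in \mathcal{E} \\
  c_i(x^*) &\ge 0, \quad i \in \mathcal{I} \\
  \lambda_i^* &\ge 0, \quad i \in \mathcal{I} \\
  \lambda_i^* c_i(x^*) &= 0, \quad i \in \mathcal{I}
  \end{aligned}
  $$

  The left-hand side of the first condition is the gradient of the Lagrangian function with respect to $x$, $\nabla_x L(x^*, \lambda^*)$. These conditions are known as the Karush-Kuhn-Tucker (KKT) conditions.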

- Second-order necessary conditions: Suppose $x^*$ is a local minimum and also a regular point. Let $\lambda^*$ be the Lagrange multipliers that satisfy the KKT conditions. If $f(x)$ and $c_i(x)$, $i \in \mathcal{E} \cup \mathcal{I}$, are twice continuously differentiable, the following conditions hold:

  $$
  d^T \nabla_x^2 L(x^*, \lambda^*) \, d \ge 0
  $$

  for all $d \in \mathbb{R}^n$ that satisfy

  $$
  \nabla c_i(x^*)^T d = 0 \quad \text{for every active constraint } i \in \mathcal{A}(x^*)
  $$

- Second-order sufficient conditions: Suppose there exist a point $x^*$ and some Lagrange multipliers $\lambda^*$ such that the KKT conditions are satisfied. If the conditions

  $$
  d^T \nabla_x^2 L(x^*, \lambda^*) \, d > 0
  $$

  hold for all $d \in \mathbb{R}^n$, $d \ne 0$, that satisfy

  $$
  \nabla c_i(x^*)^T d = 0 \quad \text{for every active constraint } i \in \mathcal{A}(x^*)
  $$

  then $x^*$ is a strict local solution.

Note that the set of all such $d$'s forms the null space of the matrix $\left[ \nabla c_i(x^*)^T \right]_{i \in \mathcal{A}(x^*)}$, whose rows are the transposed gradients of the active constraints. Hence we can search for strict local solutions by numerically checking the Hessian of the Lagrangian function projected onto the null space. For a rigorous treatment of the optimality conditions, see Fletcher (1987) and Nocedal and Wright (1999).
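The null-space check described above can be sketched as follows. This is a minimal illustration, not the procedure the NLPC solver uses internally. It assumes that the derivative values at the candidate point are supplied by the caller, that constraints are written in the "$\ge$" form with the Lagrangian $f - \sum \lambda_i c_i$, and that every active inequality constraint has a strictly positive multiplier. If an active inequality has a zero multiplier, the textbook sufficient condition involves a larger set of directions, which this sketch does not handle. All function names are hypothetical.

```python
import math


def kkt_holds(grad_f, c_vals, c_grads, lam, n_eq, tol=1e-8):
    """KKT test at a point; the first n_eq constraints are equalities."""
    n = len(grad_f)
    # Stationarity: grad f - sum_i lam_i * grad c_i = 0
    resid = [grad_f[j] - sum(l * g[j] for l, g in zip(lam, c_grads)) for j in range(n)]
    if any(abs(r) > tol for r in resid):
        return False
    for i, (c, l) in enumerate(zip(c_vals, lam)):
        if i < n_eq:
            if abs(c) > tol:  # c_i = 0
                return False
        elif c < -tol or l < -tol or abs(l * c) > tol:
            return False      # needs c_i >= 0, lam_i >= 0, lam_i * c_i = 0
    return True


def null_space(rows, n, tol=1e-10):
    """Basis of {d in R^n : A d = 0}, found by reducing A to reduced row echelon form."""
    a = [list(map(float, r)) for r in rows]
    pivots, r = [], 0
    for col in range(n):
        if r == len(a):
            break
        p = max(range(r, len(a)), key=lambda i: abs(a[i][col]))
        if abs(a[p][col]) <= tol:
            continue
        a[r], a[p] = a[p], a[r]
        piv = a[r][col]
        a[r] = [v / piv for v in a[r]]
        for i in range(len(a)):
            if i != r:
                f = a[i][col]
                a[i] = [vi - f * vr for vi, vr in zip(a[i], a[r])]
        pivots.append(col)
        r += 1
    basis = []
    for free in (c for c in range(n) if c not in pivots):
        d = [0.0] * n
        d[free] = 1.0
        for row, pc in enumerate(pivots):
            d[pc] = -a[row][free]
        basis.append(d)
    return basis


def projected_hessian(h, basis):
    """Z^T H Z, where the basis vectors are the columns of Z."""
    hz = [[sum(hi[j] * d[j] for j in range(len(d))) for hi in h] for d in basis]
    return [[sum(ui * vi for ui, vi in zip(u, hv)) for hv in hz] for u in basis]


def is_positive_definite(a):
    """Cholesky attempt; succeeds only for symmetric positive definite matrices."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:
                    return False
                low[i][i] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return True


# Hypothetical problem: minimize -x0*x1 subject to x0 + x1 - 2 = 0 and x0 >= 0.
# Candidate: x* = (1, 1) with multipliers lam* = (-1, 0).
grad_f = [-1.0, -1.0]
c_vals = [0.0, 1.0]                    # equality is active, x0 >= 0 is inactive
c_grads = [[1.0, 1.0], [1.0, 0.0]]
lam = [-1.0, 0.0]
hess_l = [[0.0, -1.0], [-1.0, 0.0]]    # constraints are linear, so this is the Hessian of f

print(kkt_holds(grad_f, c_vals, c_grads, lam, n_eq=1))  # True
basis = null_space([c_grads[0]], n=2)                   # only the active gradient
proj = projected_hessian(hess_l, basis)
print(is_positive_definite(hess_l))                     # False: indefinite on all of R^2
print(is_positive_definite(proj))                       # True: positive definite on the null space
```

The example is chosen so that the Hessian of the Lagrangian is indefinite on the whole space yet positive definite on the null space of the active constraint gradients, which is why the projection matters.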

Copyright © 2008 by SAS Institute Inc., Cary, NC, USA. All rights reserved.