Nonlinear Optimization Methods

The following table summarizes the options available in the NLO system.

Table 6.1: NLO options

|

Option |

Description |

|---|---|

|

Optimization Specifications |

|

|

TECHNIQUE= |

minimization technique |

|

UPDATE= |

update technique |

|

LINESEARCH= |

line-search method |

|

LSPRECISION= |

line-search precision |

|

HESCAL= |

type of Hessian scaling |

|

INHESSIAN= |

start for approximated Hessian |

|

RESTART= |

iteration number for update restart |

|

Termination Criteria Specifications |

|

|

MAXFUNC= |

maximum number of function calls |

|

MAXITER= |

maximum number of iterations |

|

MINITER= |

minimum number of iterations |

|

MAXTIME= |

upper limit seconds of CPU time |

|

ABSCONV= |

absolute function convergence criterion |

|

ABSFCONV= |

absolute function convergence criterion |

|

ABSGCONV= |

absolute gradient convergence criterion |

|

ABSXCONV= |

absolute parameter convergence criterion |

|

FCONV= |

relative function convergence criterion |

|

FCONV2= |

relative function convergence criterion |

|

GCONV= |

relative gradient convergence criterion |

|

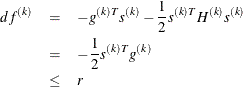

XCONV= |

relative parameter convergence criterion |

|

FSIZE= |

used in FCONV, GCONV criterion |

|

XSIZE= |

used in XCONV criterion |

|

Step Length Options |

|

|

DAMPSTEP= |

damped steps in line search |

|

MAXSTEP= |

maximum trust region radius |

|

INSTEP= |

initial trust region radius |

|

Printed Output Options |

|

|

PALL |

display (almost) all printed optimization-related output |

|

PHISTORY |

display optimization history |

|

PHISTPARMS |

display parameter estimates in each iteration |

|

PSHORT |

reduce some default optimization-related output |

|

PSUMMARY |

reduce most default optimization-related output |

|

NOPRINT |

suppress all printed optimization-related output |

|

Remote Monitoring Options |

|

|

SOCKET= |

specify the fileref for remote monitoring |

These options are described in alphabetical order.

$$
\frac{\max_j |\theta_j^{(k)} - \theta_j^{(k-1)}|}{\max(|\theta_j^{(k)}|, |\theta_j^{(k-1)}|, \text{XSIZE})} \leq r
$$
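The relative parameter change criterion above can be illustrated with a short Python sketch. It is not part of the NLO system. The function names are hypothetical, and the code rests on three assumptions: `theta_k` and `theta_prev` hold the parameter estimates from iterations k and k-1, `r` stands for the XCONV= value, and `xsize` for the XSIZE= value. The formula is read here as the maximum over j of the per-parameter ratio, which is one plausible interpretation of the notation.

```python
from typing import Sequence


def relative_parameter_change(
    theta_k: Sequence[float],
    theta_prev: Sequence[float],
    xsize: float = 0.0,
) -> float:
    """Largest per-parameter relative change between two iterations.

    Assumed reading of the criterion: for each parameter j, divide
    |theta_j(k) - theta_j(k-1)| by max(|theta_j(k)|, |theta_j(k-1)|, XSIZE)
    and take the maximum of these ratios over j.
    """
    if len(theta_k) != len(theta_prev):
        raise ValueError("parameter vectors must have the same length")

    worst = 0.0
    for cur, prev in zip(theta_k, theta_prev):
        change = abs(cur - prev)
        denom = max(abs(cur), abs(prev), xsize)
        # denom == 0 only when both values are 0 and xsize is 0,
        # in which case the change is also 0.
        ratio = 0.0 if denom == 0.0 else change / denom
        worst = max(worst, ratio)
    return worst


def xconv_satisfied(
    theta_k: Sequence[float],
    theta_prev: Sequence[float],
    r: float,
    xsize: float = 0.0,
) -> bool:
    """Return True when the relative parameter change is at most r."""
    return relative_parameter_change(theta_k, theta_prev, xsize) <= r
```

In this sketch, a positive `xsize` puts a floor under the denominator, so parameters that are very close to zero do not produce a large relative change from a tiny absolute change.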